New release

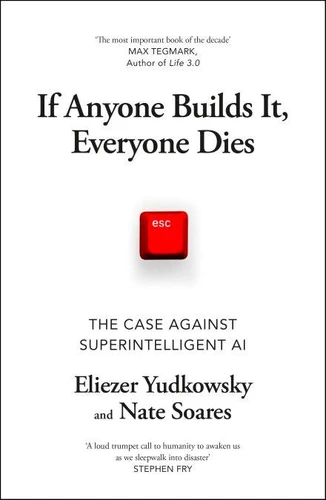

If Anyone Builds It, Everyone Dies. The Case Against Superintelligent AI

By: Eliezer Yudkowsky, Nate Soares

Available in your Decitre or Furet du Nord customer account as soon as your order is confirmed. The protected ePub format is:

- Compatible with reading on My Vivlio (smartphone, tablet, computer)

- Compatible with reading on Vivlio e-readers

- For e-readers other than Vivlio, you must use the Adobe Digital Editions software. Not compatible with reading on Kindle, Remarkable, and Sony e-readers

- Not available for purchase outside metropolitan France

Vivlio, who are they? Our digital reading platform partner, where you will find all your ebooks free of charge.

To learn more about our ebooks, see our online help.

- Number of pages: 272

- Format: ePub

- ISBN: 978-1-5299-6467-7

- EAN: 9781529964677

- Publication date: 18 September 2025

- Digital protection: Adobe DRM

- Additional information: epub

- Publisher: Vintage Digital

Summary

AN INSTANT NEW YORK TIMES BESTSELLER

'The most important book of the decade' MAX TEGMARK, author of Life 3.0

'A loud trumpet call to humanity to awaken us as we sleepwalk into disaster - we must wake up' STEPHEN FRY

'The best no-nonsense, simple explanation of the AI risk problem I've ever read' YISHAN WONG, former Reddit CEO

AI is the greatest threat to our existence that we have ever faced. The scramble to create superhuman AI has put us on the path to extinction - but it's not too late to change course.

Two pioneering researchers in the field, Eliezer Yudkowsky and Nate Soares, explain why artificial superintelligence would be a global suicide bomb and call for an immediate halt to its development. The technology may be complex, but the facts are simple: companies and countries are in a race to build machines that will be smarter than any person, and the world is devastatingly unprepared for what will come next.

Could a machine superintelligence wipe out our entire species? Would it want to? Would it want anything at all? In this urgent book, Yudkowsky and Soares explore the theory and the evidence, present one possible extinction scenario and explain what it would take for humanity to survive. The world is racing to build something truly new - and if anyone builds it, everyone dies.

** A Guardian Biggest Book of the Autumn **
